Preface

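Picking up where we left off: the previous section briefly introduced the basic principles and usage of simple (one-variable) linear regression. For ops engineers, practice is what matters most, so in this section we'll put it into practice on the topic raised earlier: exploring the relationship between CPU and QPS.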

Getting the data

1. CPU data

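My monitoring data lives in Alibaba Cloud's managed Prometheus, and Alibaba Cloud offers a way to query it: set up a local Prometheus and use its `remote_read` feature to read the remote data from Alibaba Cloud.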

- Set up a local Prometheus

- Prepare the configuration file

Contents of `~/workspace/prometheus/docker/prometheus.yml`:

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# Here it's Prometheus itself.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: "prometheus"
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
      - targets: ["localhost:9090"]

remote_read:
  - url: "https://cn-beijing.arms.aliyuncs.com:9443/api/v1/prometheus/***/***/***/cn-beijing/api/v1/read"
    read_recent: true
```

For `remote_read`, see the Alibaba Cloud Prometheus documentation.

- Start it with Docker (run this from the directory that contains `prometheus.yml`)

```shell
docker run --name=prometheus -d \
  -v "$(pwd)/prometheus.yml":/etc/prometheus/prometheus.yml \
  -p 9090:9090 \
  -v /usr/share/zoneinfo/Asia/Shanghai:/etc/localtime \
  prom/prometheus
```


- Once it is up, fetch the data through the Prometheus HTTP API

```python
import requests


def get_container_cpu_usage_seconds_total(start_time, end_time, step):
    url = "http://localhost:9090/api/v1/query_range"
    # Total CPU (cores) used by all helloworld pods, averaged over each step window
    query = f'sum(rate(container_cpu_usage_seconds_total{{image!="",pod_name=~"^helloworld-[a-z0-9].+-[a-z0-9].+",namespace="default"}}[{step}s]))'
    params = {
        "query": query,
        "start": start_time,  # Unix timestamps (seconds)
        "end": end_time,
        "step": step          # resolution in seconds
    }
    response = requests.get(url, params=params)
    data = response.json()
    return data
```

This returns the total CPU used by the `helloworld` pods over the given time range.
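`get_container_cpu_usage_seconds_total` returns the raw JSON body, while the training code below calls `get_cpu_data`, which (judging by `len(cpu)` and `cpu[:10]`) returns a plain list of numbers. That conversion is not shown; here is a minimal sketch assuming the standard Prometheus `query_range` response shape, where each series carries `values` as `[timestamp, "value"]` pairs. The helper name `extract_values` is hypothetical.

```python
def extract_values(data):
    """Turn a Prometheus query_range JSON body into (timestamps, values).

    Assumes the standard response shape:
    {"status": "success",
     "data": {"resultType": "matrix",
              "result": [{"metric": {...}, "values": [[ts, "val"], ...]}]}}
    The sum(...) query yields a single series, so only result[0] is used.
    """
    if data.get("status") != "success":
        raise ValueError(f"Prometheus query failed: {data.get('error')}")
    result = data["data"]["result"]
    if not result:
        # No series matched the selector in this time range
        return [], []
    timestamps = [float(ts) for ts, _ in result[0]["values"]]
    # Prometheus encodes sample values as strings
    values = [float(v) for _, v in result[0]["values"]]
    return timestamps, values


# Usage with a hand-written response (illustrative numbers, not real data)
sample = {
    "status": "success",
    "data": {
        "resultType": "matrix",
        "result": [{"metric": {}, "values": [[1742659200, "0.52"], [1742659260, "0.61"]]}],
    },
}
ts, cpu = extract_values(sample)
print(ts, cpu)  # [1742659200.0, 1742659260.0] [0.52, 0.61]
```

Keeping the timestamps, rather than only the values, makes it possible to line the CPU series up with the QPS series later.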

2. QPS data

My logs are hosted on Alibaba Cloud SLS, so here I again use the Alibaba Cloud SLS API to pull the data down directly.

Training the model

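Because the SLS logs and Prometheus involve some sensitive details, I just call my own helper functions here and don't show their code. For Prometheus, refer to the code above; for the SLS logs, you can copy the sample code generated by the API debugging tool in the Alibaba Cloud documentation.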

```python
# flow is the author's own helper module (not published); see the note above
from flow import get_cpu_data, get_query_data
from datetime import datetime
import pandas as pd

start_time = datetime.strptime('2025-03-21 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp()
end_time = datetime.strptime('2025-03-21 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
step = 60        # CPU sampling interval in seconds
sls_step = 3600  # passed through to the SLS helper

query = get_query_data(start_time, end_time, sls_step)
cpu = get_cpu_data(start_time, end_time, step)

print('cpu: ', len(cpu), cpu)
print('query: ', len(query), query)
```

Let's run it.

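With one sample per minute, the CPU and QPS series are printed separately, 1440 points each (I only show the first 10 here, just enough to see what the data looks like). Make sure the two series have exactly the same number of points.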
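One way to guarantee the counts line up is to keep the timestamps and join the two series on them, instead of relying on two lists that happen to have the same length. A minimal sketch with pandas, assuming both sources can return `(timestamp, value)` pairs on the same step grid; the function name `align_series` and the sample numbers are hypothetical.

```python
import pandas as pd


def align_series(cpu_points, query_points):
    """Inner-join two lists of (timestamp, value) pairs on timestamp.

    Timestamps present in only one series are dropped, so the resulting
    'cpu' and 'query' columns always have the same length.
    """
    cpu_df = pd.DataFrame(cpu_points, columns=["ts", "cpu"])
    query_df = pd.DataFrame(query_points, columns=["ts", "query"])
    merged = cpu_df.merge(query_df, on="ts", how="inner")
    return merged.sort_values("ts").reset_index(drop=True)


# Illustrative data: the query series is missing the third minute
cpu_points = [(0, 0.50), (60, 0.55), (120, 0.70)]
query_points = [(0, 12000), (60, 13500)]
df = align_series(cpu_points, query_points)
print(len(df))  # 2
print(df)
```

An inner join silently drops unmatched points; comparing `len(df)` with the original lengths is a quick way to notice when a lot of data is being lost.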

Now start training

```python
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.metrics import mean_squared_error, r2_score
from flow import get_cpu_data, get_query_data
from datetime import datetime
import pandas as pd

start_time = datetime.strptime('2025-03-23 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp()
end_time = datetime.strptime('2025-03-25 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
step = 60
sls_step = 3600

query = get_query_data(start_time, end_time, sls_step)
cpu = get_cpu_data(start_time, end_time, step)

print('cpu: {} points, first 10: {}'.format(len(cpu), cpu[:10]))
print('query: {} points, first 10: {}'.format(len(query), query[:10]))

# Prepare the data: one row per time step
# (pd.DataFrame raises ValueError if the two lists differ in length)
data = {
    'cpu': cpu,
    'query': query
}
df = pd.DataFrame(data)
X = df[['query']]  # feature must be 2-D: a one-column DataFrame
y = df['cpu']      # target: CPU usage

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=88)

# Create and train the model
model = LinearRegression()
model.fit(X_train, y_train)

# Evaluate the model on the test set
y_pred = model.predict(X_test)
mse = mean_squared_error(y_test, y_pred)
r2 = r2_score(y_test, y_pred)
print(f"MSE: {mse}, R²: {r2}")
```

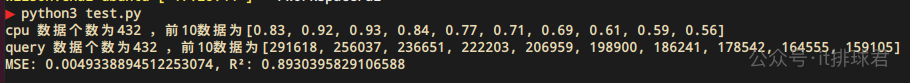

Run the script!

Well, it did train, but the model is terrible!

- We fetched 3 days of data, March 23 to March 25

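- MSE is as high as 0.04. As mentioned before, MSE squares the errors, so the typical error (the RMSE, √0.04) is 0.2, which is roughly at the 20%–30% level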

- R² is only 0.48: the model explains only 48% of the variance in the data
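To make the two metrics above concrete: RMSE is the square root of MSE (√0.04 = 0.2), and R² compares the model's squared error with that of always predicting the mean. A small sketch on made-up numbers that computes both by hand and checks them against scikit-learn:

```python
import math

from sklearn.metrics import mean_squared_error, r2_score

# Made-up actual vs. predicted CPU values, for illustration only
y_true = [1.0, 1.2, 0.8, 1.5, 1.1]
y_pred = [0.9, 1.3, 1.0, 1.2, 1.1]

# MSE: mean of the squared errors
mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
# RMSE: back in the same unit as the target (CPU cores)
rmse = math.sqrt(mse)

# R2 = 1 - SS_res / SS_tot: share of the variance around the mean that the model explains
mean = sum(y_true) / len(y_true)
ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
ss_tot = sum((t - mean) ** 2 for t in y_true)
r2 = 1 - ss_res / ss_tot

print(f"MSE={mse:.4f} RMSE={rmse:.4f} R2={r2:.4f}")
# The hand-computed values agree with scikit-learn's implementations
assert math.isclose(mse, mean_squared_error(y_true, y_pred))
assert math.isclose(r2, r2_score(y_true, y_pred))
```

Whether an RMSE of 0.2 counts as a 20% error depends on the typical CPU level; dividing RMSE by the mean of `y_true` gives that relative figure.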

So all that training was for nothing. The model is too poor; it has to be fixed.

Fixing the model

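Fixing the model starts with checking the data for anomalies. Plotting is the most intuitive check, so let's use an open-source plotting library, matplotlib. Installing it is simple: `pip3 install matplotlib`.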

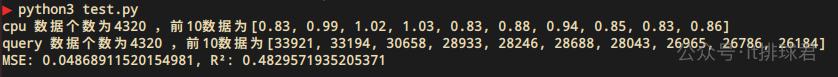

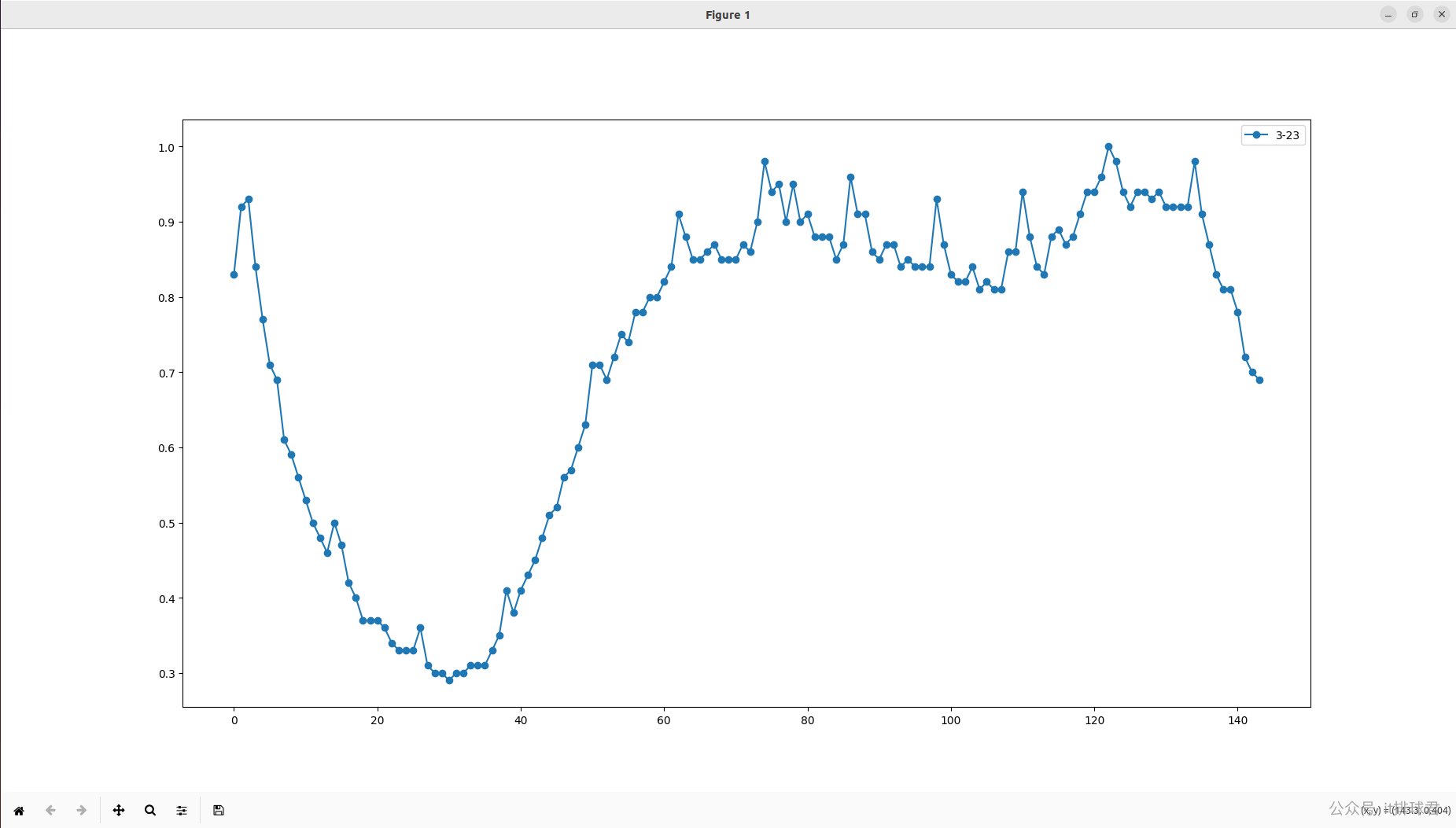

Start with one day of data, March 23:

```python
import matplotlib.pyplot as plt
from datetime import datetime
from flow import get_cpu_data

step = 60
sls_step = 3600

l = [
    {
        'name': '3-23',
        'start_time': datetime.strptime('2025-03-23 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp(),
        'end_time': datetime.strptime('2025-03-23 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
    },
]

# Draw one line per day
for piece in l:
    cpu = get_cpu_data(piece['start_time'], piece['end_time'], step)
    plt.plot(cpu, marker='o', linestyle='-', label=piece['name'])

# Legend
plt.legend()
# Show the figure
plt.show()
```

Run the script!

There are a lot of points, and they look noisy. CPU data also tends to have sharp spikes, which is bad for the fit, so let's try a longer interval: 10 minutes.

```python
step = 600  # 600 seconds = 10 minutes
```
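Why does a larger `step` remove the spikes? The query uses `rate(...[{step}s])`, so with `step = 600` each point is roughly the average per-second CPU usage over a 600-second window instead of a 60-second one, and short bursts get averaged away. The same effect can be reproduced locally by downsampling 1-minute samples into 10-minute means with pandas; the series below is synthetic, just to show the idea.

```python
import pandas as pd

# Synthetic 1-minute CPU series for one hour: steady 0.5 cores with three sharp spikes
idx = pd.date_range("2025-03-23 00:00", periods=60, freq="1min")
cpu = pd.Series(0.5, index=idx)
cpu.iloc[[7, 23, 41]] = 2.0  # short bursts

# Average each 10-minute bucket, similar in spirit to rate(...[600s]) with step=600
cpu_10m = cpu.resample("10min").mean()

print(len(cpu), len(cpu_10m))    # 60 6
print(cpu.max(), cpu_10m.max())  # 2.0 0.65
```

The trade-off is fewer data points: one day at a 600-second step gives 144 samples instead of 1440.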

Run the script!

Far fewer points now, and the outlying spikes are gone. Let's plug this into the training script.

```python
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.metrics import mean_squared_error, r2_score
from flow import get_cpu_data, get_query_data
from datetime import datetime
import pandas as pd

start_time = datetime.strptime('2025-03-23 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp()
end_time = datetime.strptime('2025-03-25 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
step = 600          # 10-minute CPU samples
sls_step = 3600*6

query = get_query_data(start_time, end_time, sls_step)
cpu = get_cpu_data(start_time, end_time, step)

print('cpu: {} points, first 10: {}'.format(len(cpu), cpu[:10]))
print('query: {} points, first 10: {}'.format(len(query), query[:10]))

# Prepare the data: one row per time step
data = {
    'cpu': cpu,
    'query': query
}
df = pd.DataFrame(data)
X = df[['query']]
y = df['cpu']

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=88)

# Create and train the model
model = LinearRegression()
model.fit(X_train, y_train)

# Evaluate the model on the test set
y_pred = model.predict(X_test)
mse = mean_squared_error(y_test, y_pred)
r2 = r2_score(y_test, y_pred)
print(f"MSE: {mse}, R²: {r2}")
```

Run the script!

Wow, the model improved:

- MSE is down to 0.004, an error (RMSE) of about 0.063, roughly in the 5%–10% range

- R² is up to 0.89, explaining nearly 90% of the variance; a big jump in model performance

To push performance further and improve the model's ability to generalize, we have to keep grinding. Let's go!

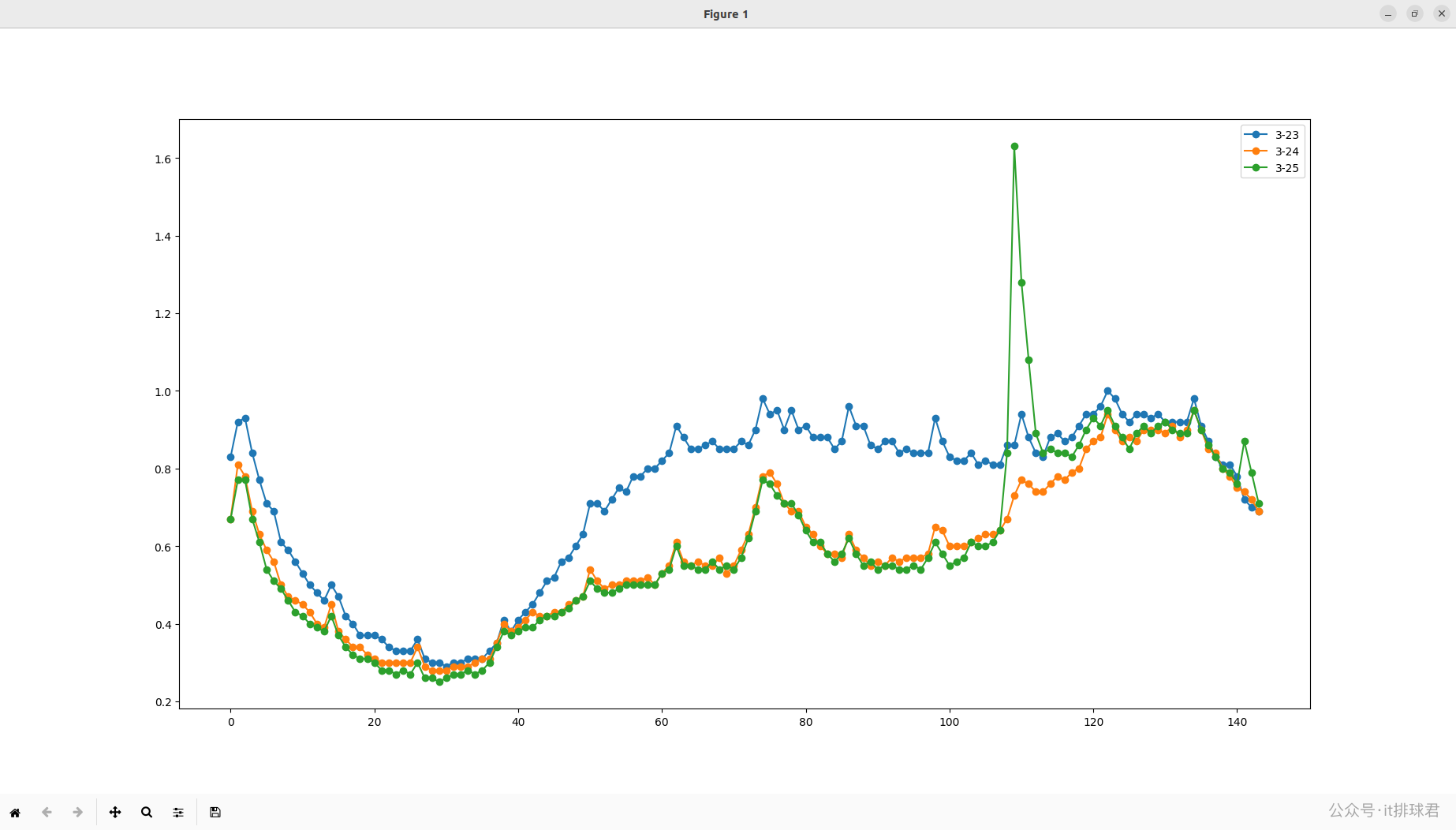

Let's analyze the data again. Since we have three days of data, plot the three days against each other and see what turns up.

```python
import matplotlib.pyplot as plt
from datetime import datetime
from flow import get_cpu_data

step = 600

l = [
    {
        'name': '3-23',
        'start_time': datetime.strptime('2025-03-23 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp(),
        'end_time': datetime.strptime('2025-03-23 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
    },
    {
        'name': '3-24',
        'start_time': datetime.strptime('2025-03-24 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp(),
        'end_time': datetime.strptime('2025-03-24 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
    },
    {
        'name': '3-25',
        'start_time': datetime.strptime('2025-03-25 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp(),
        'end_time': datetime.strptime('2025-03-25 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()
    }
]

# Draw one line per day so the days can be compared
for piece in l:
    cpu = get_cpu_data(piece['start_time'], piece['end_time'], step)
    plt.plot(cpu, marker='o', linestyle='-', label=piece['name'])

# Legend
plt.legend()
# Show the figure
plt.show()
```

Run the script!

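Found you! What's going on with the 25th, and why did it suddenly spike? I asked my colleagues on the business side: that day the system was handing out red envelopes (hongbao), and a large number of users rushed to claim them at the same moment, which caused the abnormal CPU usage.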

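Ah, so that's it: that day's data is anomalous. There are two ways to deal with it. The first is to remove all the outliers with an anomaly detection method (e.g., standard-deviation rules, box plots, isolation forest); since I don't know anomaly detection yet, I went with the second: drop the March 25 data altogether. =_=!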
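For reference, the box-plot rule mentioned above takes only a few lines with pandas: any value outside [Q1 − 1.5·IQR, Q3 + 1.5·IQR] is treated as an outlier. This is not what was done here (the post simply drops March 25); it is a sketch on synthetic numbers, the helper name `drop_iqr_outliers` is hypothetical, and 1.5 is the conventional default multiplier, not a tuned value.

```python
import pandas as pd


def drop_iqr_outliers(df, column, k=1.5):
    """Keep rows whose `column` lies within [Q1 - k*IQR, Q3 + k*IQR] (box-plot rule)."""
    q1 = df[column].quantile(0.25)
    q3 = df[column].quantile(0.75)
    iqr = q3 - q1
    mask = df[column].between(q1 - k * iqr, q3 + k * iqr)
    return df[mask]


# Synthetic cpu/query rows; the last one mimics a red-envelope burst
df = pd.DataFrame({
    "cpu":   [0.50, 0.55, 0.52, 0.58, 0.54, 0.53, 2.40],
    "query": [1000, 1100, 1050, 1150, 1080, 1060, 4800],
})
clean = drop_iqr_outliers(df, "cpu")
print(len(df), len(clean))  # 7 6
```

Filtering row by row keeps the normal hours of an unusual day, whereas dropping the whole day is simpler but throws those hours away too.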

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

from sklearn.metrics import mean_squared_error, r2_score

from flow import get_cpu_data, get_query_data

from datetime import datetime

import pandas as pd

start_time = datetime.strptime('2025-03-23 00:00:00', '%Y-%m-%d %H:%M:%S').timestamp()

end_time = datetime.strptime('2025-03-24 23:59:59', '%Y-%m-%d %H:%M:%S').timestamp()

step = 600

sls_step = 3600*6

query = get_query_data(start_time, end_time, sls_step)

cpu = get_cpu_data(start_time, end_time, step)

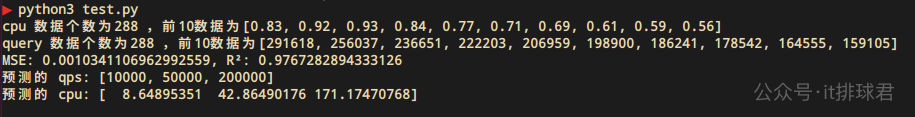

print('cpu 数据个数为{} ,前10数据为{}'.format(len(cpu), cpu[:10]))

print('query 数据个数为{} ,前10数据为{}'.format(len(query), query[:10]))

# 准备数据

data = {

'cpu': cpu,

'query': query

}

df = pd.DataFrame(data)

X = df[['query']]

y = df['cpu']

# 划分训练集和测试集

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=88)

# 创建模型并训练

model = LinearRegression()

model.fit(X_train, y_train)

# 模型评估

y_pred = model.predict(X_test)

mse = mean_squared_error(y_test, y_pred)

r2 = r2_score(y_test, y_pred)

print(f"MSE: {mse}, R²: {r2}")

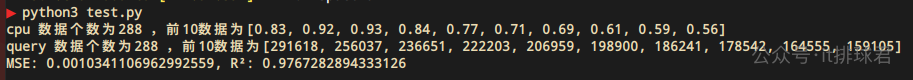

What a hard-fought, satisfying battle:

- MSE is down to 0.001, an error (RMSE) of about 0.03, within roughly 5%

- R² is up to 0.976: about 98% of the variance in the data is explained
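Since this is a one-variable linear model, the fitted relationship can be read straight off the model: `model.coef_[0]` is the extra CPU per additional request in the window, and `model.intercept_` is the baseline CPU. A sketch on synthetic data with a known slope (the 0.2 and 0.00005 below are made up), showing how those attributes map to the formula cpu ≈ intercept + coef × query:

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Synthetic data: cpu = 0.2 + 0.00005 * query, plus a little noise
rng = np.random.default_rng(0)
query = rng.uniform(5_000, 50_000, size=200)
cpu = 0.2 + 0.00005 * query + rng.normal(0, 0.01, size=200)

df = pd.DataFrame({"query": query, "cpu": cpu})
model = LinearRegression().fit(df[["query"]], df["cpu"])

slope = model.coef_[0]        # CPU cores per extra request in the window
intercept = model.intercept_  # baseline CPU at zero traffic
print(f"cpu ~= {intercept:.3f} + {slope:.6f} * query")
```

`model.predict` computes exactly `intercept + slope * query`, so the formula can be shared with people who will never run the Python code.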

The model is already pretty good, so let's make a prediction.

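```python
# Predict with the model trained above
predicted_query = [10000, 50000]  # input QPS values
new_data = pd.DataFrame({
    # the model was trained on request totals per window: QPS * 300 seconds
    "query": [x * 300 for x in predicted_query]
})
predicted_cpu = model.predict(new_data)
print("Input QPS:", predicted_query)
print("Predicted CPU:", predicted_cpu)
```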

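Note that `query` is the total number of requests within one sampling window, not a per-second rate, so a QPS value must be multiplied by the window length in seconds before it goes into the model: with a 5-minute window, a QPS of 10,000 becomes 10,000 × 300 requests. The factor has to match how the query totals were aggregated for training; since the CPU series here uses `step = 600` (10 minutes), it is worth checking whether the factor should be 600 rather than 300.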

Run the script!
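Because the conversion factor depends on the aggregation window, it helps to make the window an explicit parameter rather than a hard-coded 300. A minimal sketch; `qps_to_window_total` is a hypothetical helper, and `window_seconds` must be set to whatever window the query totals were actually aggregated over.

```python
def qps_to_window_total(qps_values, window_seconds):
    """Convert per-second request rates into request totals per sampling window."""
    return [qps * window_seconds for qps in qps_values]


# A 5-minute (300 s) window, as in the prediction code
print(qps_to_window_total([10_000, 50_000], 300))  # [3000000, 15000000]
# A 10-minute (600 s) window, matching step = 600
print(qps_to_window_total([10_000, 50_000], 600))  # [6000000, 30000000]
```

In the prediction code this would read `pd.DataFrame({"query": qps_to_window_total(predicted_query, window_seconds)})`.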

Show-off time

- Boss: How much load can our system handle?

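- Me (the workhorse): Our system's daily QPS is around 50,000 and its peak QPS around 200,000, consuming about 42 and 172 CPU cores respectively. With our 4-core/8 GB cloud servers, that's 11 and 43 servers (42 ÷ 4 = 10.5, rounded up; 172 ÷ 4 = 43). We're currently running 100 servers, so we could cut server costs by more than 50%.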

- Boss: Awesome! I'll treat you to a bowl of malatang with the money we save.

- Me (the workhorse): Thank you for your guidance, boss.
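The server counts in that answer are predicted CPU cores divided by cores per machine, rounded up. A minimal sketch; `servers_needed` and its `target_utilization` parameter are hypothetical. A value of 1.0 means every core runs at 100%, which is the assumption behind the numbers above; a lower value leaves headroom.

```python
import math


def servers_needed(cpu_cores, cores_per_server=4, target_utilization=1.0):
    """Round predicted CPU cores up to a whole number of servers."""
    return math.ceil(cpu_cores / (cores_per_server * target_utilization))


print(servers_needed(42))   # 11  (42 / 4 = 10.5)
print(servers_needed(172))  # 43  (172 / 4 = 43)
print(servers_needed(172, target_utilization=0.7))  # 62
```

Planning for 100% utilization at peak leaves no margin for traffic bursts like the red-envelope day, which is why a target below 1.0 is common in practice.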

Contact me

- Get in touch with me for a more in-depth discussion

That's the end of this article.

My knowledge is limited, so if I've gotten anything wrong or left anything out, please don't hesitate to point it out…